
TerraMesh

A planetary‑scale, multimodal, analysis‑ready dataset for Earth‑Observation foundation models. TerraMesh merges data from Sentinel‑1 SAR, Sentinel‑2 optical, Copernicus DEM, NDVI, and land‑cover sources into more than 9 million co‑registered patches ready for large‑scale representation learning. You can find more information about the data sampling and preprocessing in our paper: TerraMesh: A Planetary Mosaic of Multimodal Earth Observation Data.

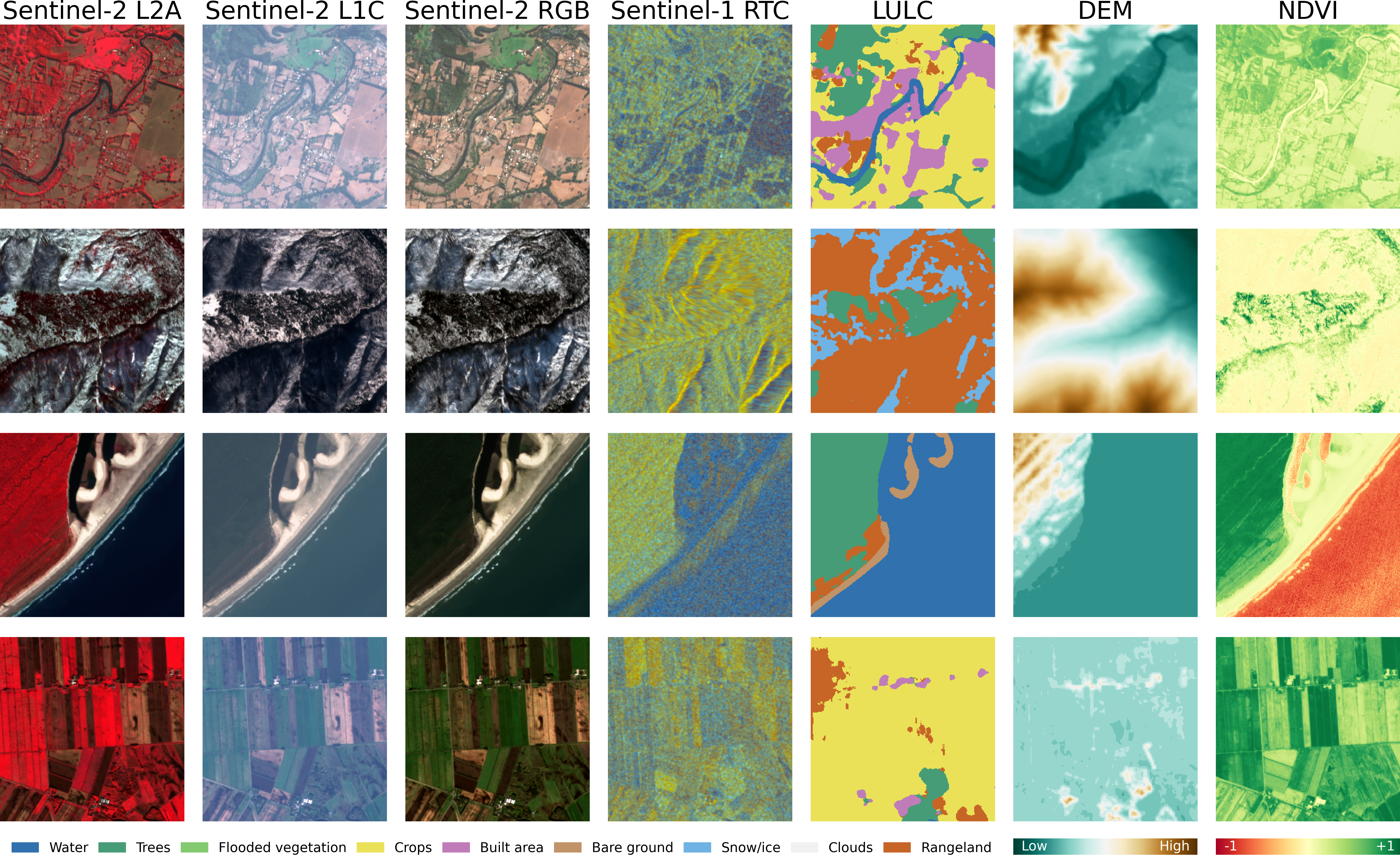

Samples from the TerraMesh dataset with seven spatiotemporally aligned modalities. Sentinel-2 L2A uses IRRG pseudo-coloring and Sentinel-1 RTC is visualized in dB scale as VH-VV-VV/VH. Copernicus DEM is scaled based on the image value range with an additional 10 meter buffer to highlight flat scenes.

News

- March 6, 2026: We provide metadata files with a better overview of all samples.

- March 5, 2026: We fixed a temporal alignment issue in the Sentinel-1 GRD data due to an error in the source dataset SSL4EO-S12 (see issue).

- August 1, 2025: TerraMesh is released!

Dataset organisation

The archive ships two top‑level splits, train/ and val/, each holding one folder per modality. The {train,val}_metadata.parquet files provide an overview of the samples, and terramesh.py contains the data loading code (see Usage).

TerraMesh
├── train
│   ├── DEM
│   ├── LULC
│   ├── NDVI
│   ├── S1GRD
│   ├── S1RTC
│   ├── S2L1C
│   ├── S2L2A
│   └── S2RGB
├── val
│   ├── DEM
│   └── ...
├── train_metadata.parquet
├── val_metadata.parquet
└── terramesh.py

Each folder includes up to 889 shard files, containing up to 10240 samples each. Samples from MajorTom-Core are stored in shards with the pattern majortom_{split}_{id}.tar while shards with SSL4EO-S12 samples start with ssl4eos12_.

Samples are stored as Zarr Zip files, which can be loaded with zarr (version <= 2.18) or xarray.open_zarr(). Each sample location includes seven modalities that share the same shard and sample name. Note that each sample only includes one Sentinel-1 version (S1GRD or S1RTC) because of different processing versions in the source datasets.

Each Zarr file includes aligned metadata as shown by this S1GRD example from sample ssl4eos12_val_0080385.zarr.zip:

<xarray.Dataset> Size: 283kB
Dimensions:      (band: 2, time: 1, y: 264, x: 264)
Coordinates:
  * band         (band) <U2 16B "vv" "vh"
    sample       <U9 36B "0194630_1"
    spatial_ref  int64 8B 0
  * time         (time) datetime64[ns] 8B 2020-05-03T02:07:17
  * x            (x) float64 2kB 6.004e+05 6.004e+05 ... 6.03e+05 6.03e+05
  * y            (y) float64 2kB 4.275e+06 4.275e+06 ... 4.273e+06 4.273e+06
Data variables:
    bands        (time, band, y, x) float16 279kB -9.461 -10.77 ... -16.67
    center_lat   float64 8B 38.61
    center_lon   float64 8B -121.8
    crs          int64 8B 32610
    file_id      (time) <U67 268B "S1A_IW_GRDH_1SDV_20201105T020809_20201105T...

Sentinel-2 modalities and LULC additionally provide a cloud_mask as metadata.

Description

TerraMesh fuses complementary optical, radar, topographic and thematic layers into pixel‑aligned 10 m cubes, allowing models to learn joint representations of land cover, vegetation dynamics and surface structure at planetary scale. The dataset is globally distributed and covers multiple years.

Performance evaluation

TerraMesh was used to pre-train TerraMind-B. On the six evaluated segmentation tasks from PANGAEA bench, TerraMind‑B reaches an average mIoU of 66.6%, the best overall score with an average rank of 2.33. This amounts to roughly a 3pp improvement over the next‑best open model (CROMA), underscoring the benefits of pre‑training on TerraMesh. Compared to an ablation model pre-trained only on SSL4EO-S12 locations, TerraMind-B performs 1pp better overall, with better global generalization on more remote tasks like CTM-SS. More details are available in our paper.

Usage

Setup

Install the required packages with:

pip install huggingface_hub webdataset torch numpy albumentations fsspec braceexpand zarr==2.18.0 numcodecs==0.15.1

Important! The dataset was created using zarr==2.18.0 and numcodecs==0.15.1. Zarr 3.0 has backwards compatibility issues, and Zarr 2.18 is incompatible with NumCodecs >= 0.16.

Download

You can download the dataset with the Hugging Face CLI tool. Please note that the full dataset requires 17 TB of storage.

hf download ibm-esa-geospatial/TerraMesh --repo-type dataset --local-dir data/TerraMesh

If you would like to download only a subset of the data, you can specify it with --include.

# Only download val data

hf download ibm-esa-geospatial/TerraMesh --repo-type dataset --include "val/*" --local-dir data/TerraMesh

# Only download a single modality (e.g., S2L2A)

hf download ibm-esa-geospatial/TerraMesh --repo-type dataset --include "*/S2L2A/*" --local-dir data/TerraMesh
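To get a feeling for what the --include patterns above select, the following sketch applies them to a few example paths with Python's fnmatch. It assumes shell-style glob matching similar to fnmatch; the file list is illustrative and only follows the split/modality/shard layout described in this README, and the real selection is done by the Hugging Face tooling.

```python
from fnmatch import fnmatch

# Illustrative repository paths following the <split>/<modality>/<shard>.tar layout.
repo_files = [
    "train/S2L2A/majortom_shard_000001.tar",
    "val/S2L2A/majortom_shard_000001.tar",
    "val/S2RGB/majortom_shard_000001.tar",
    "val_metadata.parquet",
    "terramesh.py",
]


def select(files, pattern):
    """Return the paths that match a glob pattern (fnmatch semantics)."""
    return [f for f in files if fnmatch(f, pattern)]


val_only = select(repo_files, "val/*")
s2l2a_only = select(repo_files, "*/S2L2A/*")

print("val/*      ->", val_only)
print("*/S2L2A/*  ->", s2l2a_only)
```

Under these assumptions, neither pattern picks up terramesh.py or the metadata Parquet files, so you may need to download those separately.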

We recommend logging in with the Hugging Face CLI tool (check with hf auth whoami or run hf auth login) to avoid hitting download limits, and using a reduced number of workers (--max-workers 4).

If the download fails, rerun the command to resume it automatically.

Data loader

We provide the data loading code in terramesh.py, which is downloaded together with the dataset. For development with streaming, you can download only this file via this link or with:

wget https://huggingface.co/datasets/ibm-esa-geospatial/TerraMesh/resolve/main/terramesh.py

You can use the build_terramesh_dataset function to initialize a dataset, which uses the WebDataset package to load samples from the shard files. You can stream the data from Hugging Face using the URLs or download the full dataset and pass a local path (e.g., data/TerraMesh/).

from terramesh import build_terramesh_dataset
from torch.utils.data import DataLoader

# If you only pass one modality, the modality is loaded with the "image" key
dataset = build_terramesh_dataset(
    path="https://huggingface.co/datasets/ibm-esa-geospatial/TerraMesh/resolve/main/",  # Streaming or local path
    modalities=["S2L2A"],
    split="val",
    shuffle=False,  # Set false for split="val"
    batch_size=8
)
# Batch keys: ["__key__", "__url__", "image"]

# If you pass multiple modalities, the modalities are returned using the modality names as keys
dataset = build_terramesh_dataset(
    path="https://huggingface.co/datasets/ibm-esa-geospatial/TerraMesh/resolve/main/",  # Streaming or local path
    modalities=["S2L2A", "S2L1C", "S2RGB", "S1GRD", "S1RTC", "DEM", "NDVI", "LULC"],
    shuffle=False,  # Set false for split="val"
    split="val",
    batch_size=8
)

# Set batch size to None because batching is handled by WebDataset.
dataloader = DataLoader(dataset, batch_size=None, num_workers=4, persistent_workers=True, prefetch_factor=1)

# Iterate over the dataloader
for batch in dataloader:
    print("Batch keys:", list(batch.keys()))
    # Batch keys: ["__key__", "__url__", "S2L2A", "S2L1C", "S2RGB", "S1RTC", "DEM", "NDVI", "LULC"]
    # Because S1RTC and S1GRD are not present for all samples, each batch only includes one S1 version.

    print("Data shape:", batch["S2L2A"].shape)
    # Data shape: torch.Size([8, 12, 264, 264])
    # Dimensions [batch, channel, h, w]. The code removes the time dim from the source data.
    break
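Since each batch contains only one Sentinel-1 version, downstream code may need to check which key is present. The helper below is a hypothetical sketch (pick_s1_key is not part of terramesh.py) that works on any dict-like batch; a plain dict with placeholder values stands in for a real batch of tensors.

```python
def pick_s1_key(batch, candidates=("S1RTC", "S1GRD")):
    """Return the Sentinel-1 key that is present in this batch, or None."""
    for key in candidates:
        if key in batch:
            return key
    return None


# Stand-in batch; real batches hold tensors instead of placeholder strings.
example_batch = {"__key__": ["sample"], "S2L2A": "tensor", "S1GRD": "tensor"}

s1_key = pick_s1_key(example_batch)
print("Sentinel-1 modality in this batch:", s1_key)
```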

Data transform

We provide some additional code for wrapping albumentations transform functions.

We recommend albumentations because parameters are shared between all image modalities (e.g., same random crop).

However, it requires some code wrapping to bring the data into the expected shape.

import albumentations as A
from albumentations.pytorch import ToTensorV2
from terramesh import build_terramesh_dataset, Transpose, MultimodalTransforms, MultimodalNormalize, statistics

# Define all image modalities
modalities = ["S2L2A", "S2L1C", "S2RGB", "S1GRD", "S1RTC", "DEM", "NDVI", "LULC"]

# Define multimodal transform function that converts the data into the expected shape from albumentations
val_transform = MultimodalTransforms(
    transforms=A.Compose([  # We use albumentations because of the shared transform between image modalities
        Transpose([1, 2, 0]),  # Convert data to channel last (expected shape from albumentations)
        MultimodalNormalize(mean=statistics["mean"], std=statistics["std"]),
        A.CenterCrop(224, 224),  # Use center crop in val split
        # A.RandomCrop(224, 224),  # Use random crop in train split
        # A.D4(),  # Optionally, use random flipping and rotation for the train split
        ToTensorV2(),  # Convert to tensor and back to channel first
        ],
        is_check_shapes=False,  # Not needed because of aligned data in TerraMesh
        additional_targets={m: "image" for m in modalities}
    ),
    non_image_modalities=["__key__", "__url__"],  # Additional non-image keys
)

dataset = build_terramesh_dataset(
    path="https://huggingface.co/datasets/ibm-esa-geospatial/TerraMesh/resolve/main/",
    modalities=modalities,
    split="val",
    transform=val_transform,
    batch_size=8,
)

If you only use a single modality, you don't need to specify additional_targets, but you need to change the normalization to:

MultimodalNormalize(
    mean={"image": statistics["mean"]["<modality>"]},
    std={"image": statistics["std"]["<modality>"]}
),

Returning metadata

You can pass return_metadata=True to build_terramesh_dataset() to load center longitude and latitude, timestamps, and the S2 cloud mask as additional metadata.

The resulting batch keys include: ["__key__", "__url__", "S2L2A", "S1RTC", ..., "center_lon", "center_lat", "cloud_mask", "time_S2L2A", "time_S1RTC", ...].

Therefore, you need to update the transform if you use one:

val_transform = MultimodalTransforms(
    transforms=A.Compose([...],
        additional_targets={m: "image" for m in modalities + ["cloud_mask"]}
    ),
    non_image_modalities=["__key__", "__url__", "center_lon", "center_lat"] + ["time_" + m for m in modalities]
)

For a single modality dataset, "time" does not have a suffix and the following changes for the transform are required:

val_transform = MultimodalTransforms(
    transforms=A.Compose([...],
        additional_targets={"cloud_mask": "image"}
    ),
    non_image_modalities=["__key__", "__url__", "center_lon", "center_lat", "time"]
)

Note that center points are not updated when a random crop is used. The cloud mask provides the classes land (0), water (1), snow (2), thin cloud (3), thick cloud (4), cloud shadow (5), and no data (6). DEM does not return a time value, while LULC uses the S2 timestamp because of the augmentation using the S2 cloud and ice mask. Time values are returned as integers but can be converted back to datetime with:

batch["time_S2L2A"].numpy().astype("datetime64[ns]")
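The cloud mask class IDs listed above can be turned into per-class pixel fractions, for example to filter cloudy samples. The following is a minimal sketch on a toy mask given as nested lists; the helper name class_fractions is hypothetical, and for a real tensor you would first convert a single mask with something like .tolist().

```python
from collections import Counter

# Class IDs as listed in this README.
CLOUD_MASK_CLASSES = {
    0: "land",
    1: "water",
    2: "snow",
    3: "thin cloud",
    4: "thick cloud",
    5: "cloud shadow",
    6: "no data",
}


def class_fractions(mask_rows):
    """Return the fraction of pixels per class name for a 2D mask (list of rows)."""
    counts = Counter(value for row in mask_rows for value in row)
    total = sum(counts.values())
    return {CLOUD_MASK_CLASSES[c]: n / total for c, n in counts.items()}


# Toy 2 x 4 mask, not taken from the dataset.
toy_mask = [
    [0, 0, 1, 3],
    [0, 4, 4, 5],
]

fractions = class_fractions(toy_mask)
cloudy = fractions.get("thin cloud", 0.0) + fractions.get("thick cloud", 0.0)
print(f"Cloud fraction: {cloudy:.3f}")
```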

Metadata overview

We provide metadata Parquet files per split with the following columns:

tar          majortom_shard_000001.tar
zarr         majortom_val_0000001.zarr.zip
sample_id    47U_1147R_0_0
split        val
center_lon   103.31613
center_lat   4.303143
crs          32648
bounds       [311805.33281692077, 474521.6412456293, 314445...
geometry     POLYGON ((103.32804674037381 4.291232460225369...
S2_time      2019-08-04 03:15:49
S1_time      2019-08-10 22:55:42
cloud_cover  0.0
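Together with a modality folder name, the split and tar columns are enough to build the URL of the shard that holds a sample. The sketch below assumes the URL layout used elsewhere in this README (resolve/main/{split}/{modality}/{tar}); shard_url is a hypothetical helper and the row is a plain dict standing in for a metadata row.

```python
BASE_URL = "https://huggingface.co/datasets/ibm-esa-geospatial/TerraMesh/resolve/main"


def shard_url(row, modality, base_url=BASE_URL):
    """Build the shard URL for one metadata row and one modality folder."""
    return f"{base_url}/{row['split']}/{modality}/{row['tar']}"


# Values copied from the example row above.
row = {
    "tar": "majortom_shard_000001.tar",
    "zarr": "majortom_val_0000001.zarr.zip",
    "split": "val",
}

url = shard_url(row, "S2RGB")
print(url)
```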

Loading single samples

If you want to load a single sample, you can do so with the following code. The shard and file names are listed in the tar and zarr columns of the provided metadata files.

import io
import tarfile
import zipfile

import requests
import tqdm
import xarray as xr
import zarr

tar_url = "https://huggingface.co/datasets/ibm-esa-geospatial/TerraMesh/resolve/main/val/S2RGB/majortom_shard_000001.tar"
zarr_file = "majortom_val_0000100.zarr.zip"

# Stream the tar from HF
with requests.get(tar_url, stream=True) as r:
    r.raise_for_status()
    with tarfile.open(fileobj=r.raw, mode="r|*") as tar:
        for member in tqdm.tqdm(tar):  # Iterate over all files in tar
            if member.name == zarr_file:
                print(f"Loading {member.name}")
                fileobj = tar.extractfile(member)
                zip_bytes = io.BytesIO(fileobj.read())

                # Extract zip contents into a MemoryStore
                mem_store = zarr.storage.MemoryStore()
                with zipfile.ZipFile(zip_bytes) as zf:
                    for name in zf.namelist():
                        mem_store[name] = zf.read(name)

                ds = xr.open_zarr(mem_store)
                break

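Because tar files have an O(N) lookup, this can take a while depending on the position of the file in the tar. Alternatively, save member.offset_data and member.size for all samples in a metadata file and download only the required bytes. Note that member.offset_data is where the file contents start, whereas member.offset points to the preceding tar header.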

import requests
import xarray as xr

# Load byte offset and size from a metadata file (not provided)
offset = int(metadata.at[zarr_file, "offset"])
size = int(metadata.at[zarr_file, "size"])
outfile = "tmp.zarr.zip"

# Request only the bytes of this sample (tar_url and zarr_file as defined above)
headers = {"Range": f"bytes={offset}-{offset + size - 1}"}
with requests.get(tar_url, headers=headers, stream=True) as r:
    r.raise_for_status()
    with open(outfile, "wb") as f:
        for chunk in r.iter_content(1024 * 1024):
            f.write(chunk)

ds = xr.open_zarr(outfile)
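Such an offset index is not shipped with the dataset, but it could be built once per downloaded shard with Python's tarfile module. The sketch below is one possible way to do this, demonstrated on a small in-memory tar with made-up file names; build_tar_with and index_tar are hypothetical helpers. It records TarInfo.offset_data (start of the file contents) and TarInfo.size, and then checks that slicing the raw bytes returns the payload, which is what a Range request would fetch.

```python
import io
import tarfile


def build_tar_with(files):
    """Create an uncompressed tar archive in memory from a {name: bytes} dict."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tar:
        for name, payload in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(payload)
            tar.addfile(info, io.BytesIO(payload))
    return buf.getvalue()


def index_tar(tar_bytes):
    """Map each member name to (data offset, size) within the tar archive."""
    index = {}
    with tarfile.open(fileobj=io.BytesIO(tar_bytes), mode="r:") as tar:
        for member in tar:
            index[member.name] = (member.offset_data, member.size)
    return index


# Made-up members standing in for the .zarr.zip files of a shard.
tar_bytes = build_tar_with({
    "a.zarr.zip": b"first sample",
    "b.zarr.zip": b"second sample",
})
index = index_tar(tar_bytes)

# The same offset and size would go into the HTTP Range header.
offset, size = index["b.zarr.zip"]
payload = tar_bytes[offset:offset + size]
print(index, payload)
```

For a real shard, open the local .tar file instead of the in-memory bytes and store the resulting index, for example as extra columns next to the tar and zarr names.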

If you have any issues with data loading, please create a discussion in the community tab and tag @blumenstiel.

Citation

If you use TerraMesh, please cite:

@article{blumenstiel2025terramesh,
  title={Terramesh: A planetary mosaic of multimodal earth observation data},
  author={Blumenstiel, Benedikt and Fraccaro, Paolo and Marsocci, Valerio and Jakubik, Johannes and Maurogiovanni, Stefano and Czerkawski, Mikolaj and Sedona, Rocco and Cavallaro, Gabriele and Brunschwiler, Thomas and Bernabe-Moreno, Juan and others},
  journal={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops},
  year={2025},
}

License

TerraMesh is released under the Creative Commons Attribution‑ShareAlike 4.0 (CC‑BY‑SA‑4.0) license.

Acknowledgements

TerraMesh is part of the FAST‑EO project funded by the European Space Agency Φ‑Lab (contract #4000143501/23/I‑DT).

The satellite data (S2L1C, S2L2A, S1GRD, S1RTC) is sourced from the SSL4EO‑S12 v1.1 (CC-BY-4.0) and MajorTOM‑Core (CC-BY-SA-4.0) datasets.

The LULC data is provided by ESRI, Impact Observatory, and Microsoft (CC-BY-4.0).

The cloud masks used for augmenting the LULC maps are provided as metadata and are produced using the SEnSeIv2 model.

The DEM data is produced using Copernicus WorldDEM-30 © DLR e.V. 2010-2014 and © Airbus Defence and Space GmbH 2014-2018 provided under COPERNICUS by the European Union and ESA; all rights reserved.
